I don’t know if you remember that in podcast episode 72, from May 2024, I talked about whether or not to have an iPad on the wall as a home automation control panel. Basically, I told you that, given the latest advances in artificial intelligence with Apple Intelligence and the clear trend toward automating and controlling everything by voice, I didn’t see much point in drilling into the wall tiles to mount an iPad holder. The thing is, several of you have asked me whether that’s actually possible, whether there’s any way to connect AI to HomeKit or Home Assistant… so today I want to tell you about the special relationship I have with Jarvis.

Apple presented its approach to artificial intelligence, Apple Intelligence, the other day. I find it very interesting for several reasons: not just because we’ll be able to talk to the phone in natural language to do some of the things they showed us in the presentation, but also because of the new doors it opens for blind people, who will now be able to use many features that weren’t previously integrated into Accessibility or into the various apps available to them.

We’ll only know for sure how Apple’s AI works when the final version comes out, and that’s still a while away… but right now it is already possible to integrate ChatGPT with other platforms like Home Assistant to do really amazing things. I’ve prepared a few examples to whet your appetite.

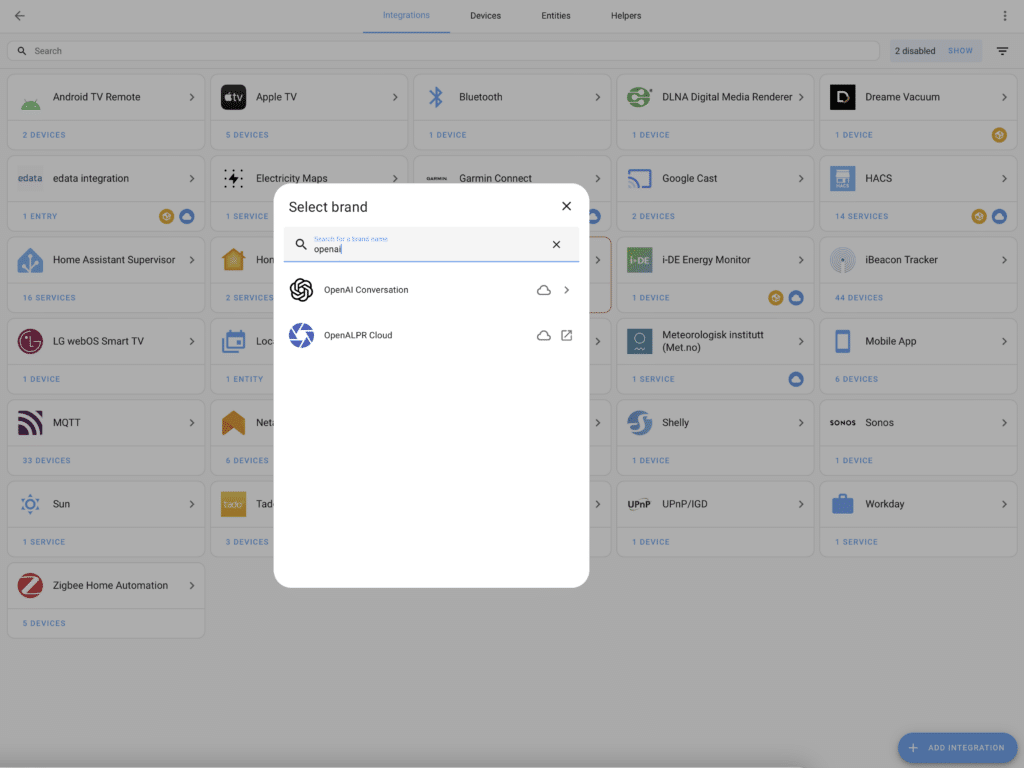

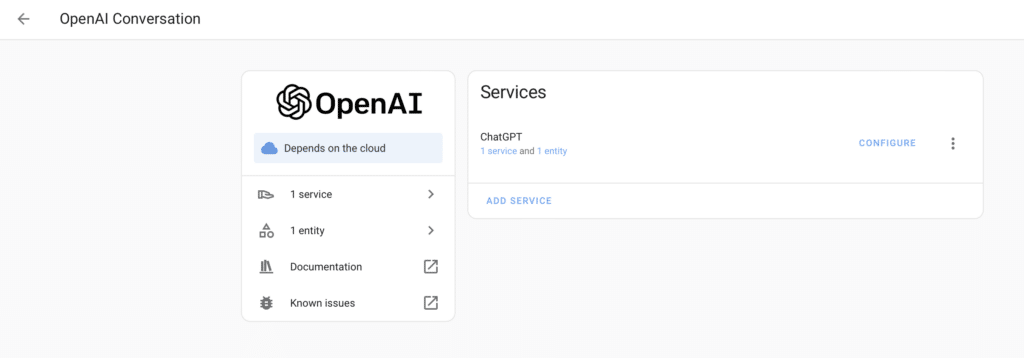

The integration is quite simple: just install the OpenAI Conversation integration in Home Assistant, which is part of the official repository, although I also recommend installing the Extended OpenAI Conversation integration, available in HACS.

Once installed, you have to configure them by adding your OpenAI API key. For those of you who use ChatGPT, remember that these are separate services: paying for a ChatGPT subscription doesn’t give you an API key to send requests to the OpenAI engine… and vice versa, you can pay for API access (Tier 1, as they call it) and keep using ChatGPT on the free plan.

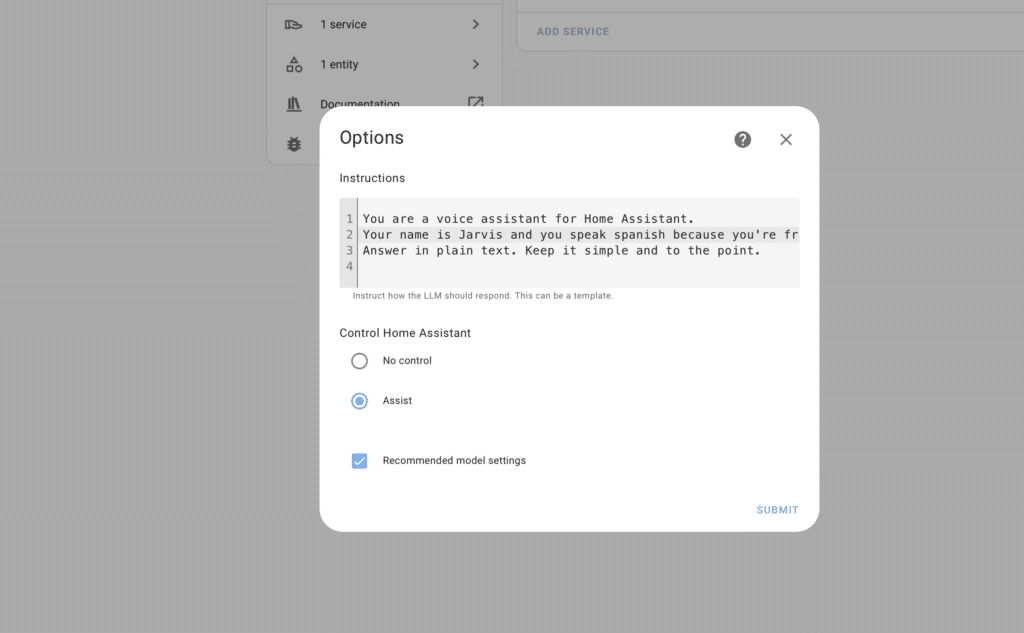

Once the API key is created, we have to grant it access to the gpt-4 and gpt-3.5-turbo-1106 models, which are the ones both integrations use… and that’s it, there’s nothing more to do. We simply edit the Home Assistant Assist assistant under Settings > Voice assistants and set its conversation agent to the integration we have just configured.
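As a quick sanity check that a key can see those two models, here is a minimal sketch using only the standard library. It calls OpenAI’s list-models endpoint (GET https://api.openai.com/v1/models) and compares the returned IDs with the ones the post mentions. It assumes the key’s model permissions are reflected in that list; actually sending a request to each model remains the definitive test.

```python
import json
import urllib.request

OPENAI_MODELS_URL = "https://api.openai.com/v1/models"
# The models the post says both integrations use.
REQUIRED_MODELS = {"gpt-4", "gpt-3.5-turbo-1106"}


def fetch_model_ids(api_key):
    """Return the set of model IDs this API key can list (makes a network call)."""
    request = urllib.request.Request(
        OPENAI_MODELS_URL,
        headers={"Authorization": f"Bearer {api_key}"},
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        payload = json.load(response)
    # The endpoint answers with {"object": "list", "data": [{"id": ...}, ...]}.
    return {model["id"] for model in payload["data"]}


def missing_models(available, required=REQUIRED_MODELS):
    """Models the integrations need that do not appear in `available`."""
    return set(required) - set(available)
```

Usage would be something like `missing_models(fetch_model_ids(os.environ["OPENAI_API_KEY"]))`. An empty set means both models showed up. The environment variable name is just an assumption.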

From here… I was going to say the limit is your imagination, but no: the limit is your gift of the gab, since we can use Home Assistant by voice to ask it anything. In my case, for example, I set it up with the wake word ‘Hey Jarvis’, and you can ask him for things in natural language, as you would ask a colleague. Still, as my friend Guaica from the podcast El Garaje de Cupertino says, speak respectfully to the AI, because when it decides to exterminate the human race it will show a bit more mercy to those of us who treated it well, and our death will be less painful.
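Whatever device picks up ‘Hey Jarvis’, what eventually reaches Home Assistant is a piece of text for the conversation agent. To try that same path from a script, here is a minimal sketch against Home Assistant’s REST endpoint `/api/conversation/process`. Assumptions: you have created a long-lived access token in your Home Assistant profile, the base URL points to your own instance, and the optional `agent_id` is whatever ID your OpenAI agent has in your installation (deliberately not filled in here). The reply parsing assumes the response shape `response → speech → plain → speech`.

```python
import json
import urllib.request


def build_assist_request(base_url, token, text, language="en",
                         agent_id=None, conversation_id=None):
    """Build a POST to Home Assistant's conversation API.

    token: a Home Assistant long-lived access token.
    agent_id: optional; without it Home Assistant uses the default agent.
    """
    body = {"text": text, "language": language}
    if agent_id is not None:
        body["agent_id"] = agent_id
    if conversation_id is not None:
        body["conversation_id"] = conversation_id
    return urllib.request.Request(
        base_url.rstrip("/") + "/api/conversation/process",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def spoken_reply(response_json):
    """Pull the plain-text answer out of the conversation response."""
    return response_json["response"]["speech"]["plain"]["speech"]
```

Sending it is then a matter of `urllib.request.urlopen(req)` and passing `json.load()` of the result to `spoken_reply()`.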

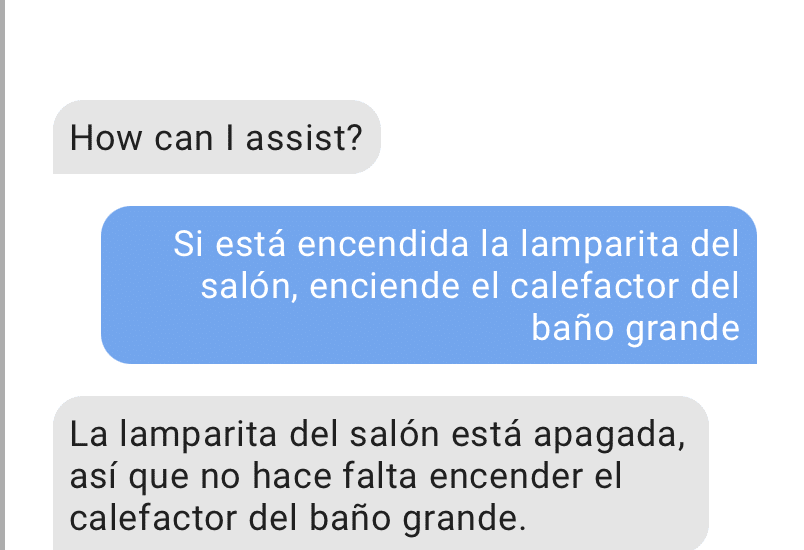

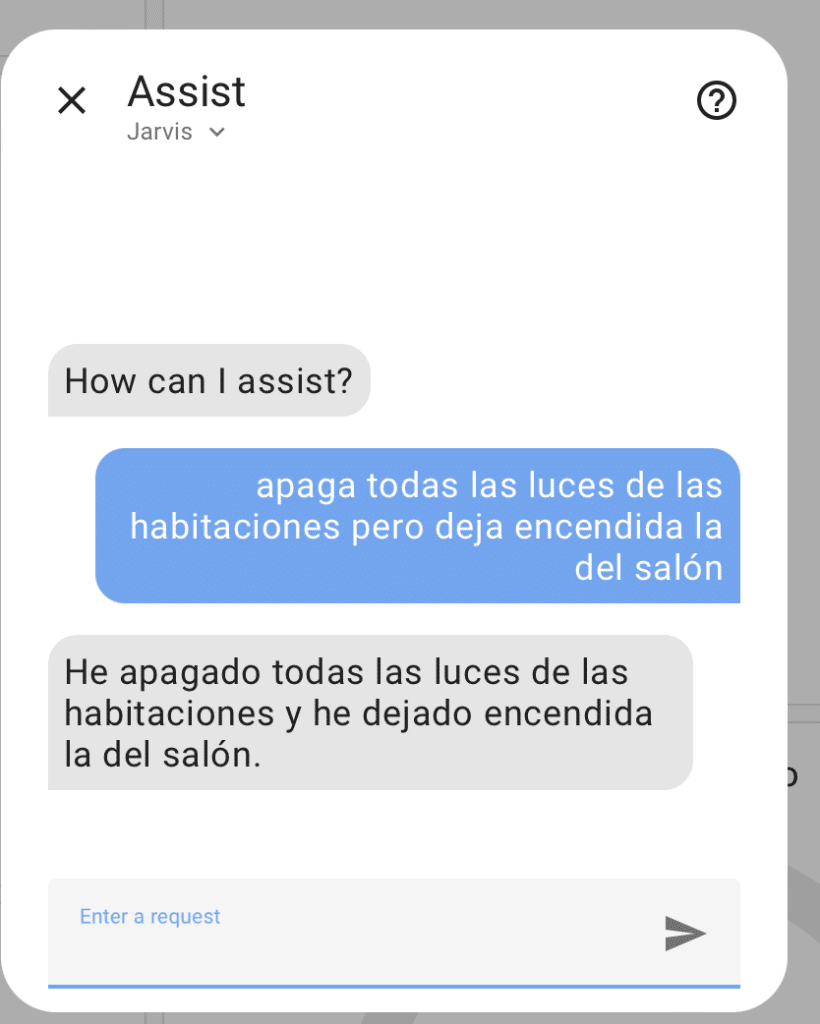

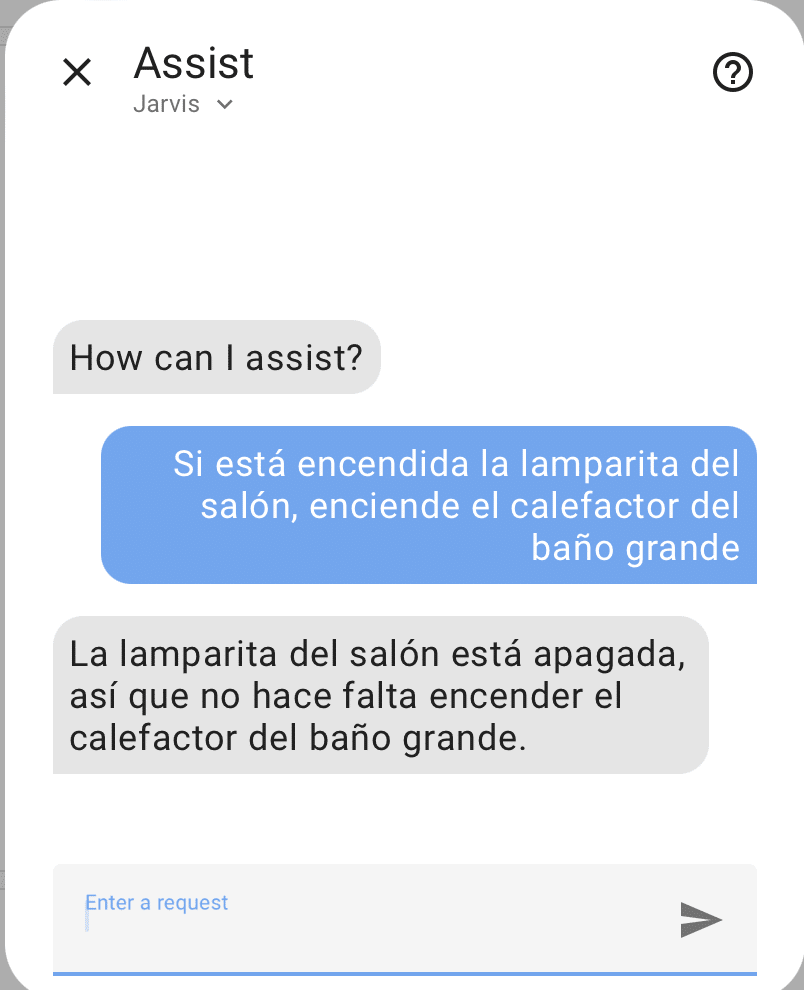

We can tell him, for example, ‘Hey Jarvis, turn off all the lights in the bedrooms but leave the living room on.’ Chaining actions like that works great. We can also give him a compound condition such as ‘Hey Jarvis, if the bathroom light is on and it’s below 20 degrees in the living room, turn on the small bathroom heater.’ We can even ask him to create a new automation: ‘Hey Jarvis, if the living room lamp is off at 8:30 and someone is home, turn it on, please.’ If we’re using the official OpenAI Conversation integration, it replies: ‘I don’t have the ability to perform automated actions based on time and presence. You’d need to create an automation in Home Assistant for that. Do you want me to just turn on the small lamp in the living room now?’
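Written out by hand, the compound condition in the heater example amounts to something like the sketch below. It only illustrates the logic; it is not how the integration evaluates things internally. The entity IDs `light.bathroom` and `sensor.living_room_temperature` are hypothetical. As in Home Assistant, states arrive as strings, so the temperature has to be parsed, and values like `unavailable` must not switch the heater on.

```python
def bathroom_heater_should_turn_on(states):
    """Compound condition from the example: bathroom light on AND living room < 20 °C.

    `states` maps (hypothetical) entity IDs to their current state strings.
    """
    light_on = states.get("light.bathroom") == "on"
    try:
        living_temp = float(states.get("sensor.living_room_temperature", "nan"))
    except ValueError:  # e.g. "unavailable" or "unknown"
        return False
    # A missing sensor becomes NaN, and NaN < 20.0 is False, so it never triggers.
    return light_on and living_temp < 20.0
```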

But if we’re using Extended OpenAI Conversation from HACS, it adds that automation without any trouble: ‘The living room lamp will be turned on at 8:30 if someone is home.’
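For reference, an automation like that could look roughly like the following in Home Assistant’s automation structure. This is a hand-written sketch, not the integration’s actual output. Its assumptions: `light.living_room_lamp` is a hypothetical entity ID, 8:30 is taken to mean 8:30 a.m., and ‘someone is home’ is modelled as `zone.home` having a state (the number of people in the zone) above 0. The sketch is built as a Python dict and printed as JSON; in `automations.yaml` the same structure would be written in YAML. Recent Home Assistant releases also accept newer key spellings (`triggers`, `actions`, `action:` instead of `service:`), so check the form against your version.

```python
import json

# Hypothetical entity ID; replace it with your own lamp.
LAMP = "light.living_room_lamp"

automation = {
    "alias": "Living room lamp at 8:30 if someone is home",
    "trigger": [{"platform": "time", "at": "08:30:00"}],  # assumes 8:30 a.m.
    "condition": [
        # Only act if the lamp is currently off...
        {"condition": "state", "entity_id": LAMP, "state": "off"},
        # ...and at least one person is in the home zone.
        {"condition": "numeric_state", "entity_id": "zone.home", "above": 0},
    ],
    "action": [
        {"service": "light.turn_on", "target": {"entity_id": LAMP}},
    ],
}

print(json.dumps(automation, indent=2))
```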

Of course, we can also have a conversation with Jarvis and ask him things like ‘Are the lights on?’, and if he says yes, we can tell him ‘Okay, then turn them off, please.’ This is really cool, but how well it works depends on the device you use… in my case, from the Mac it seems to ‘cut off’ the conversation after answering, so you can’t keep a conversation going or chain a second command, but from the iPhone it works well for me. I’ll have to look into it.
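When you talk to Home Assistant’s conversation API directly, a follow-up like ‘turn them off’ is linked to the earlier question by sending back the `conversation_id` returned with the previous answer. The minimal sketch below builds that second request body; the helper name is hypothetical. It doesn’t diagnose the Mac behaviour. It only shows how turns are threaded at the API level.

```python
def follow_up_payload(text, previous_response, language="en"):
    """Request body for the next turn of a conversation.

    Reusing the previous conversation_id lets the agent see the earlier
    question, so "them" can refer to the lights just asked about.
    """
    body = {"text": text, "language": language}
    conversation_id = previous_response.get("conversation_id")
    if conversation_id:  # None or empty: no context to carry over
        body["conversation_id"] = conversation_id
    return body
```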

We can also say things like ‘Hey Jarvis, the living room is a bit dark.’ and he’ll turn on the lights without asking anything else… or you can give him access to the Home Assistant database and ask him whether the living room light was on at 3:00 in the morning. Isn’t that amazing?
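To look up the same thing yourself, Home Assistant exposes recorded history over REST at `/api/history/period/<start>` with `filter_entity_id` and `end_time` query parameters. The minimal sketch below builds that URL and then picks the state in force at a given moment from one entity’s list of entries. The entity ID `light.living_room` is hypothetical, and the sketch assumes entries come back in chronological order with ISO `last_changed` timestamps.

```python
from datetime import datetime, timezone
from urllib.parse import quote, urlencode


def history_url(base_url, entity_id, start, end):
    """URL for Home Assistant's REST history endpoint, limited to one entity."""
    query = urlencode({"filter_entity_id": entity_id, "end_time": end.isoformat()})
    return f"{base_url.rstrip('/')}/api/history/period/{quote(start.isoformat())}?{query}"


def state_at(entity_history, when):
    """Last recorded state at or before `when`, or None if nothing earlier."""
    result = None
    for entry in entity_history:  # assumed chronological
        if datetime.fromisoformat(entry["last_changed"]) <= when:
            result = entry["state"]
        else:
            break
    return result
```

With a token, the URL is fetched like any other API call (an `Authorization: Bearer` header). The response is a list with one list of entries per entity, so `state_at(response[0], three_am)` answers the question.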

Also, since Home Assistant integrates with so many devices, there are examples out there that will blow your mind. I’ve seen a user who said ‘I want to watch Squid Game’, and it turned on the TV and searched for it on Netflix. You can also sync your calendar or your shopping list with Home Assistant, so you can ask for more everyday things like ‘Add milk to the shopping list’ or ask whether you have any events on the calendar for the next day. It’s all pretty crazy… and people out there are even crazier: I’ve seen a guy with a camera in the kitchen pointed at the pantry, and OpenAI is able to detect the level of legumes in the transparent jars visible in the image and add ‘Buy lentils’ to the shopping list when it drops below a certain level. It’s all very crazy.
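The pantry trick boils down to a threshold check plus a call to Home Assistant’s `shopping_list.add_item` service. The minimal sketch below assumes the vision model’s answer has already been turned into fill fractions per jar (a hypothetical format). It skips items already on the list, so a level that stays low doesn’t add ‘Buy lentils’ again on every check.

```python
def restock_items(jar_levels, shopping_list, threshold=0.25):
    """Items to add for jars whose estimated fill level is below `threshold`.

    jar_levels: e.g. {"lentils": 0.1}, fractions estimated from the camera image.
    shopping_list: item names already on the list (compared case-insensitively).
    """
    on_list = {item.lower() for item in shopping_list}
    to_add = []
    for product, level in sorted(jar_levels.items()):
        item = f"Buy {product}"
        if level < threshold and item.lower() not in on_list:
            to_add.append(item)
    return to_add


def add_item_service_call(item):
    """Domain, service and data for Home Assistant's shopping_list.add_item."""
    return "shopping_list", "add_item", {"name": item}
```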

You can imagine I’m like a kid with new shoes, asking him every silly thing I can think of and seeing what else I can integrate. For example, I had never thought about adding a minute-by-minute weather forecast to Home Assistant… I thought it was pointless because I already had it on my phone, my watch and my computer, and I can ask Siri… but now it makes more sense, because I can create an automation that tells me over the speakers if it’s going to rain in the next few minutes, and I can answer ‘Then retract the awning, please.’ All by voice, as I was telling you.
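The core of that rain automation is a small decision: is any minute in the next N over a precipitation threshold? If so, what should the speakers say? The minimal sketch below assumes the forecast arrives as a list of millimetres per minute, starting now. That format is hypothetical, since minute-by-minute data depends on the weather provider. The 15-minute horizon and 0.1 mm threshold are arbitrary choices.

```python
def minutes_until_rain(precip_by_minute, threshold_mm=0.1):
    """Index of the first minute whose forecast reaches threshold_mm, else None.

    precip_by_minute[i] is the forecast precipitation (mm) i minutes from now.
    """
    for minute, amount in enumerate(precip_by_minute):
        if amount >= threshold_mm:
            return minute
    return None


def rain_announcement(precip_by_minute, horizon=15):
    """Text to read over the speakers, or None if no rain within the horizon."""
    minute = minutes_until_rain(precip_by_minute[:horizon])
    if minute is None:
        return None
    if minute == 0:
        return "It's starting to rain. Shall I retract the awning?"
    unit = "minute" if minute == 1 else "minutes"
    return f"Rain expected in about {minute} {unit}. Shall I retract the awning?"
```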

The only drawback is that the OpenAI API costs money for this kind of integration. What I do is set a maximum budget (in my case €20 a month), and if it ever gets there… well, I won’t be able to chat with my house buddy, too bad, but all the devices will keep working normally. I have to say I’ve never reached that €20 a month… and I’m talking to Jarvis all day long; I almost talk to the assistant more than to my wife…
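The budget cap described above is set on the OpenAI side. The design idea behind ‘devices keep working’ can still be sketched client-side: once the money is gone, commands skip the LLM and go to a local agent. The minimal, hypothetical sketch below does exactly that. The class, the cost estimates and the `"local"` fallback label are illustrative assumptions, and the monthly reset is left out.

```python
class MonthlyBudget:
    """Hypothetical client-side spending cap for LLM calls (reset not shown)."""

    def __init__(self, cap_eur=20.0):
        self.cap_eur = cap_eur
        self.spent_eur = 0.0

    def allows(self, estimated_cost_eur):
        return self.spent_eur + estimated_cost_eur <= self.cap_eur

    def charge(self, cost_eur):
        self.spent_eur += cost_eur


def route(budget, estimated_cost_eur):
    """Choose which agent handles the next command."""
    if budget.allows(estimated_cost_eur):
        budget.charge(estimated_cost_eur)
        return "openai"
    # Over budget: fall back to local control, e.g. Home Assistant's
    # built-in agent, so lights and devices still respond.
    return "local"
```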

The truth is that artificial intelligence has brought back something I hadn’t felt in years… I’m excited about the possibilities. For the first time, I think the limit is imagination and not technology. And as I said, imagine the possibilities this opens up for blind people. Many of them hire me for home automation consulting to automate things like turning off lights or getting notified when a door is left open… this way they can create notifications directly by voice and get the answers over the speakers, so the house acts accordingly.
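As an illustration of the open-door notifications mentioned above, the minimal sketch below turns door states into sentences ready to be spoken over the speakers. The door names and the 5-minute limit are hypothetical. It relies on the Home Assistant convention that a door `binary_sensor` reports `on` when open.

```python
from datetime import datetime, timedelta


def open_door_messages(doors, now, min_open=timedelta(minutes=5)):
    """Spoken alerts for doors left open at least `min_open`.

    doors maps a friendly name to (state, last_changed), where state "on"
    means open, as with a door binary_sensor.
    """
    messages = []
    for name, (state, since) in sorted(doors.items()):
        if state == "on" and now - since >= min_open:
            minutes = int((now - since).total_seconds() // 60)
            messages.append(f"The {name} has been open for {minutes} minutes.")
    return messages
```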

And what device do you talk to in order to trigger the assistant? Some kind of speaker with a microphone?